Webscraper package python1/27/2024 For this step, you’ll want to inspect the source of your webpage (or open the Developer Tools Panel).

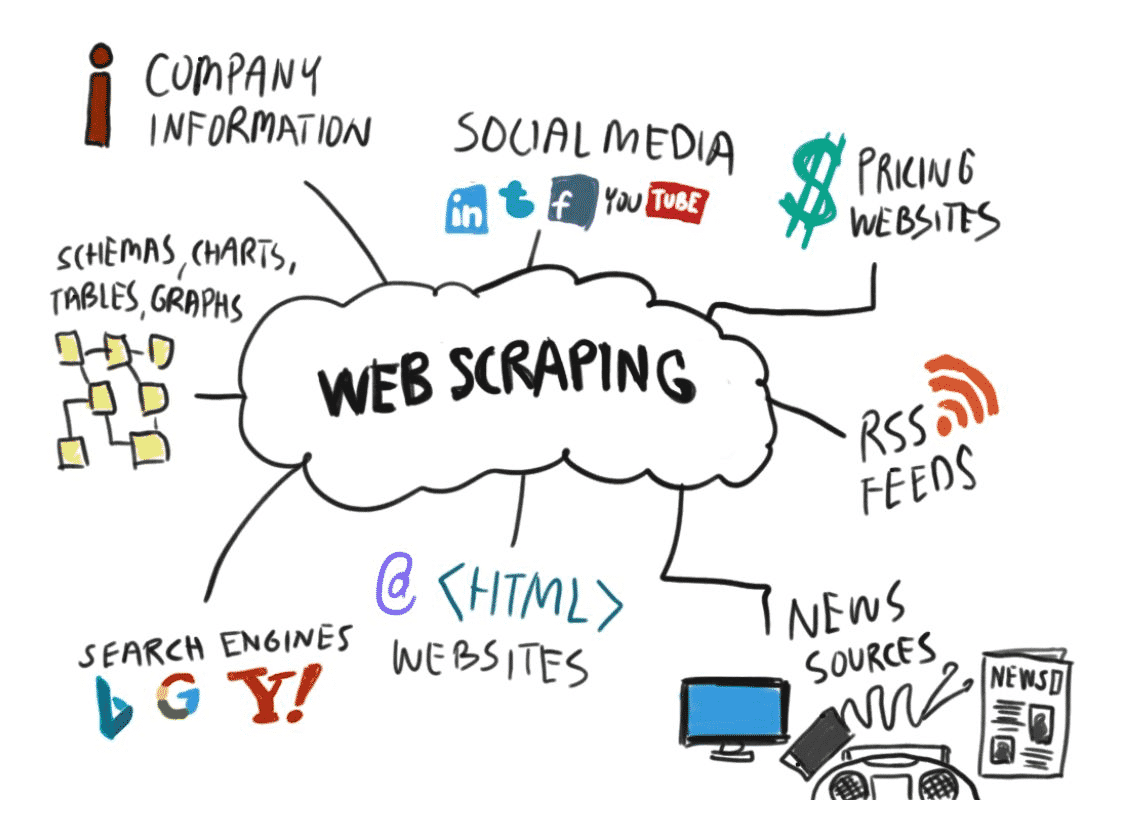

Once you’ve selected your URLs, you’ll want to figure out what HTML tags or attributes your desired data will be located under. Step 2: Find the HTML content that you want to scrape Step 1: Select the URLs you want to scrapeįor both Madewell and NET-A-PORTER, you’ll want to grab the target URL from their webpage for women’s jeans. Let’s say we want to compare the prices of women’s jeans on Madewell and NET-A-PORTER to see who has the better price.įor this tutorial, we’ll build a web scraper to help us compare the average prices of products offered by two similar online fashion retailers. However, for the purposes of this tutorial, we’ll be focusing on just three: Beautiful Soup 4 (BS4), Selenium, and the statistics.py module. Pandas: Not typically used for scraping, but useful for data analysis, manipulation, and storage.Urllib: A package used for opening URLs.LXML: A tool used for processing XML and HTML in the Python language.Python Requests: The requests library allows users to send HTTP/1.1 requests without needing to attach query strings to URLs or form-encode POST data.Selenium: A suite of open-source automation tools that provides an API to write acceptance or functional tests.Scrapy: A high-speed, open-source web crawling and scraping framework.MechanicalSoup: Another Python library used to automate interactions on websites (like submitting forms).Beautiful Soup: A popular Python library used to extract data from HTML and XML files.These are some of the most popular tools and libraries used to scrape the web using Python. What tools and libraries are used to scrape the web? 
Data storage: The last stage of web scraping involves storing the transformed data in a JSON, XML, or CSV file.Data parsing and transformation: This next step involves transforming the collected dataset into a format that can be used for further analysis (like a spreadsheet or JSON file).Data collection: In this step, data is collected from webpages (typically with a web crawler).Search Engine Optimization (SEO) monitoring.Fetching images and product descriptions.Here are some other real-world applications of web scraping: Web scraping is also great for building bots, automating complicated searches, and tracking the prices of goods and services. This data can be transferred to a spreadsheet or JSON file for easy data analysis, or it can be used to create an application programming interface (API). Web developers, digital marketers, data scientists, and journalists regularly use web scraping to collect publicly available data. Web scraping has a wide variety of applications.

Step 4: Build your web scraper in Python.Step 3: Choose your tools and libraries.Step 2: Find the HTML content you want to scrape.Step 1: Select the URLs you want to scrape.Then, we’ll take a closer look at some of the more popular Python tools and libraries used for web scraping before moving on to a quick step-by-step tutorial for building your very own web scraper. We’ll introduce you to some basic principles and applications of web scraping. Python libraries like BeautifulSoup and packages like Selenium have made it incredibly easy to get started with your own web scraping project. You may be wondering why we chose Python for this tutorial, and the short answer is that Python is considered one of the best programming languages to use for web scraping. So, why not build a web scraper to do the detective work for you? Automated web scraping is a great way to collect relevant data across many webpages in a relatively short amount of time. Crawling through this massive web of information on your own would take a superhuman amount of effort.

The internet is arguably the most abundant data source that you can access today.
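The comparison this tutorial describes (pull price strings out of each retailer's HTML, then average them) can be sketched roughly as below. This is only an illustration under several assumptions: the two HTML snippets, their prices, and the `product-price` class name are made up, and the real pages will use whatever tags and attributes you find when inspecting their source. To stay runnable without any installs, the sketch swaps in the standard library's `html.parser` for Beautiful Soup; `PriceParser` and `average_price` are hypothetical helper names, and only `statistics.mean` is taken from the tools named in the tutorial.

```python
import re
from html.parser import HTMLParser
from statistics import mean

# Hypothetical markup and prices, standing in for two retailers' product pages.
SAMPLE_STORE_A = """
<ul>
  <li><span class="product-price">$98.00</span></li>
  <li><span class="product-price">$128.00</span></li>
  <li><span class="product-price">$134.00</span></li>
</ul>
"""
SAMPLE_STORE_B = """
<div>
  <p class="product-price">$210.00</p>
  <p class="product-price">$250.00</p>
  <p class="product-price">$1,290.00</p>
</div>
"""


class PriceParser(HTMLParser):
    """Collect numeric prices from elements whose class list includes price_class.

    Assumes price elements hold plain text with no nested tags.
    """

    def __init__(self, price_class):
        super().__init__()
        self.price_class = price_class
        self._in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if self.price_class in classes:
            self._in_price = True

    def handle_endtag(self, tag):
        self._in_price = False

    def handle_data(self, data):
        if self._in_price:
            # Drop thousands separators, then take the first number in the text.
            match = re.search(r"\d+(?:\.\d+)?", data.replace(",", ""))
            if match:
                self.prices.append(float(match.group()))


def average_price(html, price_class="product-price"):
    """Return the mean of all prices found; raises StatisticsError if none are found."""
    parser = PriceParser(price_class)
    parser.feed(html)
    return mean(parser.prices)


avg_a = average_price(SAMPLE_STORE_A)
avg_b = average_price(SAMPLE_STORE_B)
cheaper = "Store A" if avg_a < avg_b else "Store B"
print(f"Store A: {avg_a:.2f}, Store B: {avg_b:.2f}, lower average: {cheaper}")
```

With a real page you would replace the sample strings with the fetched HTML and adjust `price_class` to match what the Developer Tools Panel shows for the price elements.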
